Every hybrid cloud migration we've been brought into mid-flight has had the same underlying problem, and it's never been about the tooling, the cloud provider, or the team's technical ability. The team committed to an approach before anyone clearly defined what the migration needed to accomplish.

By the time we're in the room, the cloud bill is higher than before they started, on-prem is still running in parallel with no cutover date, and engineering capacity has been split between migration and product work for so long that neither is moving well. Nobody is talking about finishing the migration anymore. They're trying to figure out how to recover from the way they started it.

This article is a decision framework for engineering leaders planning a hybrid cloud migration. We cover the business decisions that determine whether your migration finishes on time and on budget, or becomes the kind of project that quietly consumes a year of engineering capacity with nothing to show for it.

Where Hybrid Cloud Migrations Go Wrong (And What It Actually Costs)

Most engineering leaders don't choose their migration approach based on fit. They choose based on what's available, what's budgeted, and what feels defensible to stakeholders. That decision, made before a single workload moves, is where most migrations go wrong.

We've seen all three common approaches fail for predictable reasons:

The common thread across all three isn't lack of effort, but the wrong expertise arriving at the wrong stage of the migration.

Key Insight: With internal teams, the instinct when things slow down is to add more engineers. But that usually makes things worse. Bigger teams mean more coordination overhead, more misalignment, and more time spent rebuilding context. The problem was never headcount

These failures get expensive fast because the costs compound. Engineering capacity splits between migration and product work, so both slow down. On-prem and cloud run in parallel with no clear cutover criteria, and you pay full cost for both every month the migration stalls. When the first attempt doesn't hold up, the organization brings in outside help anyway, under a tighter deadline and at a higher cost than if they'd made that call at the start.

Why Hybrid Cloud Is Different From Cloud Migration

This is where we see experienced cloud teams underestimate the challenge. They've migrated workloads before, they're comfortable with AWS, and they assume hybrid is just a partial version of what they've already done.

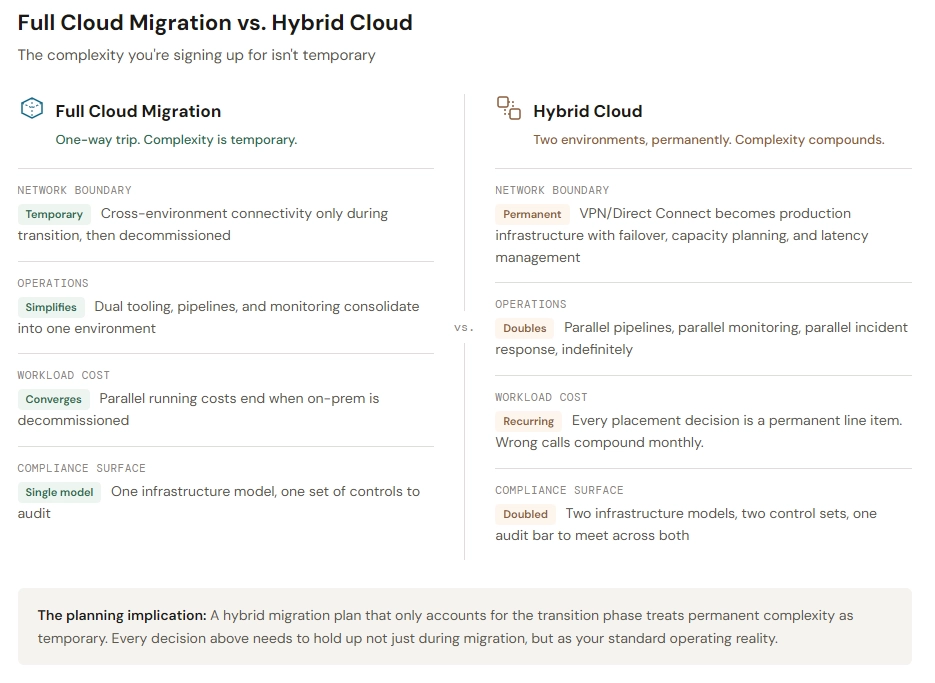

A full cloud migration is a one-way trip. You move everything, decommission on-prem, and operate in a single environment. Hybrid is fundamentally different because the complexity isn't temporary. You're committing to an architecture where two environments coexist permanently, and every decision you make has to hold up under that reality.

The Network Boundary Is Permanent Infrastructure

In a full migration, cross-environment networking is a temporary challenge during transition. In hybrid, the connectivity between on-prem and cloud is a permanent production infrastructure.

We've seen hybrid architectures that sailed through staging and buckled under real production traffic because the network layer wasn't stress-tested with realistic cross-environment patterns. The challenges that catch teams off guard:

- Latency between environments behaves differently at scale than in a proof of concept, and the on-prem side has physical constraints that don't flex on demand

- VPN and Direct Connect reliability becomes a production dependency, meaning failover and capacity planning need the same rigor as any other critical system

- DNS and service discovery across environments adds operational complexity that pure cloud setups never encounter

Unlike cloud networking, which is software-defined and flexible, the on-prem side of a hybrid boundary has hard physical limits. The intersection of the two introduces failure modes that neither environment has in isolation.

Your Team Permanently Operates Two Different Worlds

This is the cost that's hardest to see before you're living it. Hybrid means your team is running two environments with different tooling, different failure modes, different scaling behaviors, and often different security models. Not temporarily during migration, but as the standard operating reality.

Incident response changes fundamentally. When something breaks, the debugging path crosses an environment boundary. Is the problem in the cloud layer, the on-prem layer, or the connectivity between them? Teams used to single-environment troubleshooting lose hours on cross-boundary issues they've never encountered before.

Deployment processes are split. What works for cloud-native services doesn't apply to on-prem workloads, so your team maintains parallel pipelines, parallel testing procedures, and parallel release processes.

Monitoring and observability need to span both environments with unified dashboards. Gaps in cross-environment visibility are where outages hide until they become full incidents. We had a client spending $15,000 a month on observability tooling across their environments because they'd turned on every default metric without asking which ones actually informed decisions. After auditing what they needed for SLOs, paging signals, and runbooks, we brought that down to $800. Same visibility into the things that mattered, dramatically less noise and cost.

Workload Placement Has Permanent Cost Consequences

In a full migration, you eventually decommission on-prem and pay for one environment. Hybrid means you're paying for both permanently. Every workload placement decision directly affects your ongoing cost structure as a recurring line item, not a one-time migration expense.

Getting placement wrong hurts in both directions:

- Moving a workload that should have stayed on-prem creates compliance risk if it has data residency requirements, and performance problems if it depends on low-latency access to on-prem systems

- Leaving a workload on-prem that should have moved means paying for capacity that would be cheaper, more scalable, and easier to maintain in the cloud

These decisions are always evolving—as your business changes, workload requirements shift. We've seen this play out enough times that we build for flexibility of change rather than optimizing for any single variable. If you design your hybrid architecture around today's cost structure or today's compliance requirements and assume those will stay the same, you'll end up locked into decisions that compound costs the moment priorities shift.

Compliance Spans Two Different Control Models

For organizations with regulatory requirements, hybrid doubles the audit surface. Access controls, encryption standards, logging, and data handling all need to meet the same compliance bar across two fundamentally different infrastructure models.

UKi's path toward FedRamp certification required every compliance control to be implemented and auditable across both its commercial and GovCloud environments. That's a very different challenge than meeting compliance standards in a single environment, and it's the kind of requirement that surfaces halfway through a migration if nobody accounts for it during planning.

How to Structure a Hybrid Cloud Migration That Holds Up

Regardless of who executes the migration, these conditions separate the ones that finish cleanly from the ones that drift indefinitely.

Map Workload Placement First

This is the decision with the longest tail in a hybrid migration. Your networking architecture, security model, cost structure, and operational model all flow from which workloads live where.

Keep on-prem when:

- Regulatory data residency requirements mandate it

- The workload is latency-sensitive with deep dependencies on on-prem systems

- A full rewrite would be required to migrate, and the cost isn't justified by the benefit

Move to cloud when:

- There are no residency constraints or meaningful on-prem dependencies

- The workload generates ongoing infrastructure costs that compound when left in place

- Cloud-native capabilities like autoscaling, managed services, or global distribution would materially improve performance or reduce operational burden

Map this deliberately and early with both engineering and business stakeholders in the room. A structured cloud migration and integration approach prevents placement decisions from being made by default rather than by design.

Validate the Cross-Environment Architecture Before Commitment

The most common technical failure we see in hybrid migrations is a network and connectivity layer that was validated in a controlled environment and assumed to be production-ready.

Before workloads move at scale, your cross-environment architecture needs to be tested under real conditions:

- Production-level traffic volumes across the environment boundary, not synthetic benchmarks

- Failover scenarios where the primary connection drops and traffic reroutes

- Latency under load for the specific workloads that will communicate across environments

- Monitoring and alerting that spans both environments with unified visibility

This is where a proof-of-concept phase pays for itself many times over. When STEM Learning needed to migrate from on-prem to cloud-native under a three-month deadline, we didn't start by moving workloads. We delivered a proof of concept in three weeks that validated the architecture, then had a staging environment ready by week seven. That early validation meant STEM Learning's migration was completed ahead of schedule, under budget, and achieved full availability in its first month live.

Starting with a time-boxed proof of concept isn't just risk management. It's how you surface the cross-environment problems that would otherwise show up six months in, when fixing them is expensive and disruptive.

Define Cutover Criteria Before Parallel Costs Accumulate

Migrations run in parallel indefinitely when nobody has defined what "ready to cut over" means for workloads moving to cloud. Before the first workload moves, establish the specific signals that indicate readiness:

- Performance benchmarks validated under production-level load

- Security controls verified and auditable across both environments

- Load testing thresholds met consistently, not just once

- Rollback procedures tested and documented

Without these being written down and owned by a specific person, both environments keep running, and the dual-running cost compounds month after month. Cloud modernization work without defined cutover criteria produces this outcome almost every time.

Decommissioning on-prem workloads too early is a genuine risk. But never decommissioning the ones that should move, which is what happens when cutover criteria are vague or absent, is equally costly and far more common.

Plan for Permanent Dual-Environment Operations From Day One

This is where hybrid migrations differ most from full cloud migrations. In a one-way migration, operational complexity is temporary. You run both environments during transition, then simplify down to one. In hybrid, the dual-environment model is permanent.

Your migration plan needs to account for long-term operations from the beginning:

- Incident response procedures that account for cross-environment failures and clearly define escalation paths when the problem spans both environments

- Deployment pipelines that handle both cloud-native and on-prem workloads without requiring the team to context-switch between entirely different toolchains

- Security and compliance processes that maintain consistent standards across both environments while respecting the different capabilities and constraints of each

- Cost monitoring that tracks spend across both environments and flags workload placement decisions that are costing more than they should

Teams that focus exclusively on the migration phase and treat operations as something to figure out later end up with two environments they can barely manage. We've walked into exactly that situation multiple times, and the remediation always costs more than building the operational model correctly from the start.

The Build vs. Partner Decision for Hybrid Cloud Migration

When a prospective client comes to us about a hybrid cloud migration, we don't start with their architecture. We start with a question that catches most teams off guard.

"Walk me through how your infrastructure works right now."

We're not listening for technical accuracy, but rather confidence and clarity. If the team's understanding is 60–70 percent right and 30–40 percent wrong, that's a sign they've built beyond their ability to reason about what they have. That matters for hybrid migration specifically because you're not replacing the current system. You're extending it into a second environment and operating both together.

If your team doesn't fully understand what they have today, the hybrid version will be harder to operate and harder to debug when things go wrong.

Assess Your Team’s Hybrid-Specific Experience

Cloud experience and hybrid migration experience are different skill sets, and this distinction matters more for hybrid than for any other infrastructure project. The specific gaps that stall hybrid migrations:

- Cross-environment networking. Your team may be comfortable with cloud networking, but managing persistent connectivity between on-prem and cloud, with VPN failover, Direct Connect capacity planning, and latency management under production load, is a different discipline.

- Security across two live environments. RBAC, secrets management, and audit logging are fundamentally harder when the boundary between environments is a permanent architectural feature rather than a temporary migration state.

- Cost architecture for split workloads. This requires understanding not just cloud pricing but the long-term cost implications of every placement decision across both environments. Getting it wrong isn't a one-time mistake. It's a recurring expense.

- Dual operational models. Your team will be running two sets of deployment pipelines, two monitoring stacks, and two incident response procedures. If they haven't done that before, the operational overhead will be significantly higher than expected.

The question isn't whether your team is capable. It's whether anyone on the team has planned and delivered a hybrid migration end-to-end. STEM Learning faced a three-month deadline and engaged us early rather than attempting it internally. The result, delivered ahead of schedule and under budget, is what direct hybrid migration experience applied to a hard deadline looks like in practice.

Run the Real Cost Comparison

Most teams weigh the cost of a partner against the cost of doing it internally. That comparison misses the scenario that actually plays out most often:

The internal team attempts the migration, makes partial progress over six months, hits cross-environment networking or compliance roadblocks, and the organization brings in outside help anyway. But now the timeline is tighter, the budget is partially spent, the team is fatigued, and the existing work may need to be partially undone before anyone can move forward.

We had a client come to us after their internal estimates for replacing a critical platform dependency were "2 to 3 years, 8 to 10 engineers, maybe more." We scoped it as a time-boxed experiment with three engineers, three months, and low risk. By month four, it was production-ready and maintainable by their team. The cost of our engagement was a fraction of what continuing down the internal path would have consumed.

Know What to Look for in a Partner

If you decide outside help is the right call, the single most important thing to establish is whether the engineers who scope the work are the same ones who build it. Strategy without execution accountability is exactly the consultancy failure we described earlier.

Beyond that, look for:

- Specific measurable outcomes from past engagements. Not polished marketing case studies, but numbers a CFO would recognize. How long did the migration take? What happened to infrastructure costs? Did they meet the compliance deadline?

- Implementation depth. An AWS Advanced Tier Partnership or Kubernetes Certified Service Provider status signals real expertise, but only if the certified engineers are the ones doing the work on your engagement.

- A defined 90-day outcome. Any partner who can't tell you what success looks like in measurable terms before the engagement starts won't be accountable for it when it ends.

- Knowledge transfer built into the engagement. The goal is for your team to own and operate the infrastructure independently after the partner leaves. If their model requires ongoing involvement to keep things running, you've traded one dependency for another.

Questions to Ask Before You Commit

Before choosing an approach, whether internal, partner-led, or hybrid:

- What is your compliance deadline, and does your current plan realistically meet it?

- What is the monthly cost of running on-prem and cloud in parallel if the migration takes twice as long as projected?

- Has anyone on your team delivered a hybrid migration end-to-end, or are you relying on adjacent cloud experience?

- Does your team have a plan for operating two environments permanently, or is the focus entirely on the migration phase?

- Do your vendor or internal team's responsibilities include implementation through to production, or do they stop at strategy and handoff?

- What does success look like in 90 days?

- What is the total cost of a failed first attempt, including rework, team morale, stakeholder trust, and the eventual cost of outside help, compared to engaging the right team from the start?

Get Your Hybrid Cloud Migration Right the First Time

Hybrid cloud migration compounds mistakes at every layer. Overspend from months of parallel running, missed compliance deadlines, and technical debt from a fragile first attempt all stack on top of each other, and they grow the longer the migration remains unresolved.

We've been on both sides of this. We've been the team called in after a stalled internal attempt, and we've been the team engaged from the start. The outcomes are dramatically different, and so is the cost.

Our engineers embed directly into your team and build alongside them. As an AWS Advanced Tier Partner and Kubernetes Certified Service Provider, we cover infrastructure, security, compliance, and cost optimization within a single engagement, so the expertise to get a hybrid migration right is present from the first decision through to the final cutover.

Planning a hybrid cloud migration? Talk to our engineers about your timeline, budget, and risk profile before you commit to an approach.