
It started with a reasonable question from a client:

“Why is this specific action slower in our test environment than in production? Test has way less traffic — shouldn’t it be faster?”

Lower traffic should mean less load, which should mean faster responses. In serverless architectures, that intuition can mislead you and cost hours of chasing the wrong cause. Fortunately, we figured it out in minutes because we had the data we needed in a form we could actively explore.

That gap is where most serverless performance work actually lives. This article is about what’s happening underneath, why AWS Lambda cold starts get blamed for problems they didn’t cause, and when they genuinely are the bottleneck worth solving.

The term “cold start” collapses three distinct phases into one blob, which is part of why teams struggle to debug it. The Lambda execution lifecycle has three stages: INIT, INVOKE, and SHUTDOWN. The cold start is the INIT phase, and it has its own substructure.

One detail worth knowing: as of August 1, 2025, AWS bills the INIT phase for all Lambda functions, including ZIP-packaged managed runtimes that were previously free during initialization. This standardized how INIT is billed across configurations.

For most workloads, the billing impact is modest, but there are exceptions:

Monthly cost increases of 10–50% have been reported for these cases.
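To get a feel for the size of the INIT charge, here is a rough back-of-the-envelope sketch. It assumes INIT time is billed like ordinary duration (GB-seconds times a per-GB-second price) and ignores per-request charges and rounding. The function name, the workload numbers, and the price are illustrative assumptions, so check the price against current AWS pricing for your region and architecture.

```python
def init_billing_cost(cold_starts, avg_init_ms, memory_mb, price_per_gb_second):
    """Estimate the cost of billed INIT time, in the currency of the price."""
    # GB-seconds = number of cold starts * seconds of INIT * memory in GB
    gb_seconds = cold_starts * (avg_init_ms / 1000) * (memory_mb / 1024)
    return gb_seconds * price_per_gb_second


# Hypothetical workload: 1M cold starts per month, 500 ms INIT, 1024 MB memory.
# The price below is an assumed example value, not a quoted rate.
monthly_init_cost = init_billing_cost(
    cold_starts=1_000_000,
    avg_init_ms=500,
    memory_mb=1024,
    price_per_gb_second=0.0000166667,
)
print(f"Estimated monthly INIT cost: {monthly_init_cost:.2f}")
```

Plugging in your own cold start count and average `@initDuration` shows quickly whether the change is noise or a line item worth attention.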

Back to the original client question: test was slower than production despite lower traffic.

In production, Lambda invocations are frequent enough that AWS keeps execution environments warm. A request arrives, there’s already a running environment, and the INIT phase is skipped entirely. In a test environment, requests arrive minutes or hours apart. AWS reaps idle environments (community benchmarks put the idle window at around 7–45 minutes, depending on memory allocation), so each test invocation is more likely to be cold.

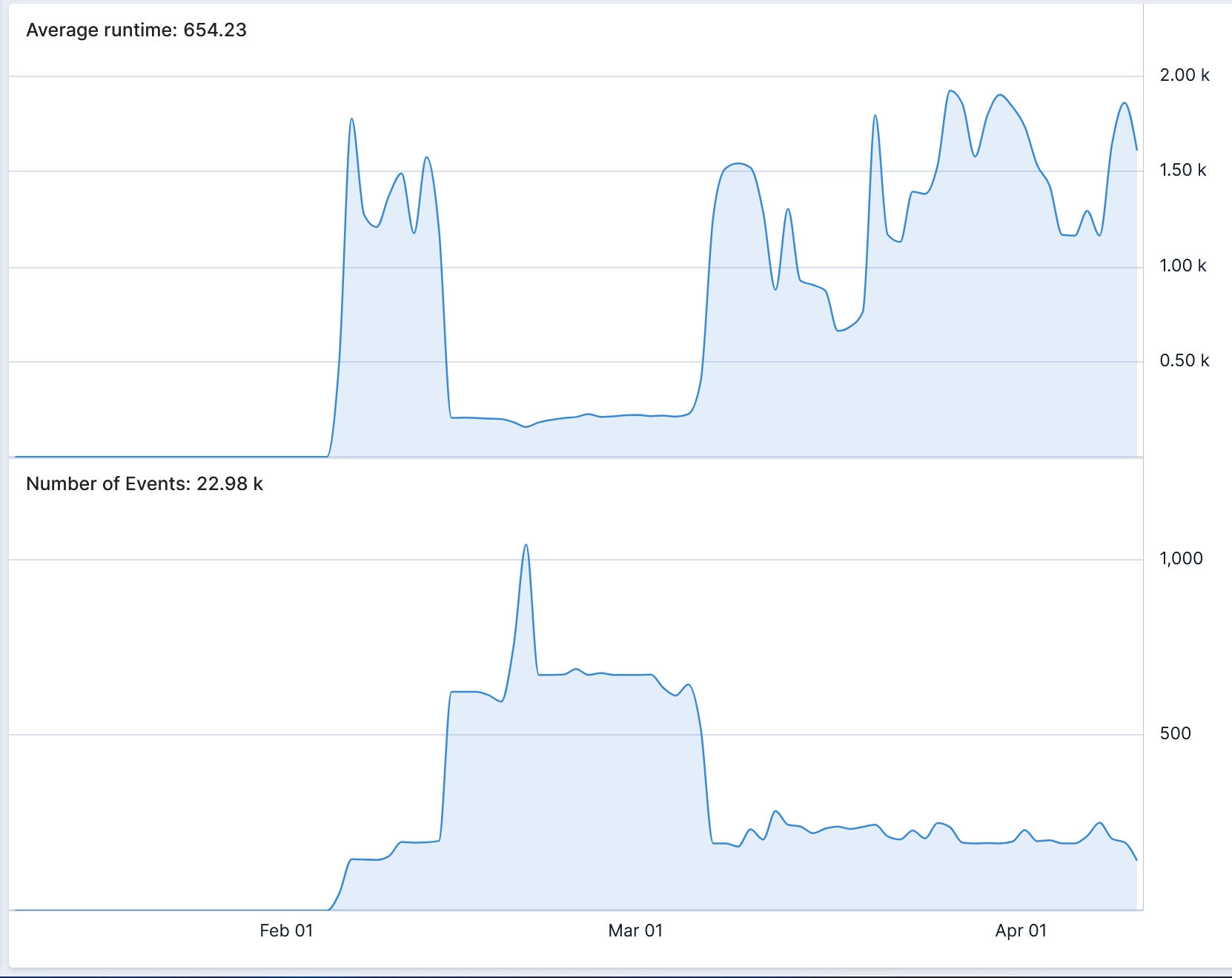

The counterintuitive consequence: lower traffic produces higher average latency. When we graphed average duration against invocations per minute on the same time axis, the inverse correlation was immediate: traffic up, runtime down; traffic down, runtime spikes. Cold start cost made visible.
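The inverse relationship can be illustrated with a deliberately simple toy model. This is a sketch under stated assumptions, not a description of how Lambda schedules environments: a single execution environment, evenly spaced requests, a fixed idle timeout, and made-up latency numbers.

```python
def average_latency_ms(gap_seconds, idle_timeout_seconds, warm_ms, init_ms, requests=1000):
    """Average latency for evenly spaced requests hitting one environment."""
    total = 0.0
    for i in range(requests):
        # The first request is always cold. Later requests are cold only if
        # the environment sat idle longer than the assumed reaping timeout.
        cold = i == 0 or gap_seconds > idle_timeout_seconds
        total += warm_ms + (init_ms if cold else 0.0)
    return total / requests


# Assumed values: 10-minute idle timeout, 50 ms warm handler, 800 ms INIT.
busy = average_latency_ms(gap_seconds=1, idle_timeout_seconds=600, warm_ms=50, init_ms=800)
quiet = average_latency_ms(gap_seconds=1800, idle_timeout_seconds=600, warm_ms=50, init_ms=800)
print(f"busy: {busy:.1f} ms, quiet: {quiet:.1f} ms")
```

With one request per second, only the very first call pays for INIT. With one request every 30 minutes, every call does, so the quiet environment reports a far higher average despite carrying almost no load.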

This also explains why test and prod often diverge even when the code is identical. If production uses provisioned concurrency (keeping instances warm) and test uses on-demand, you’re comparing two different execution models and then being surprised they perform differently.

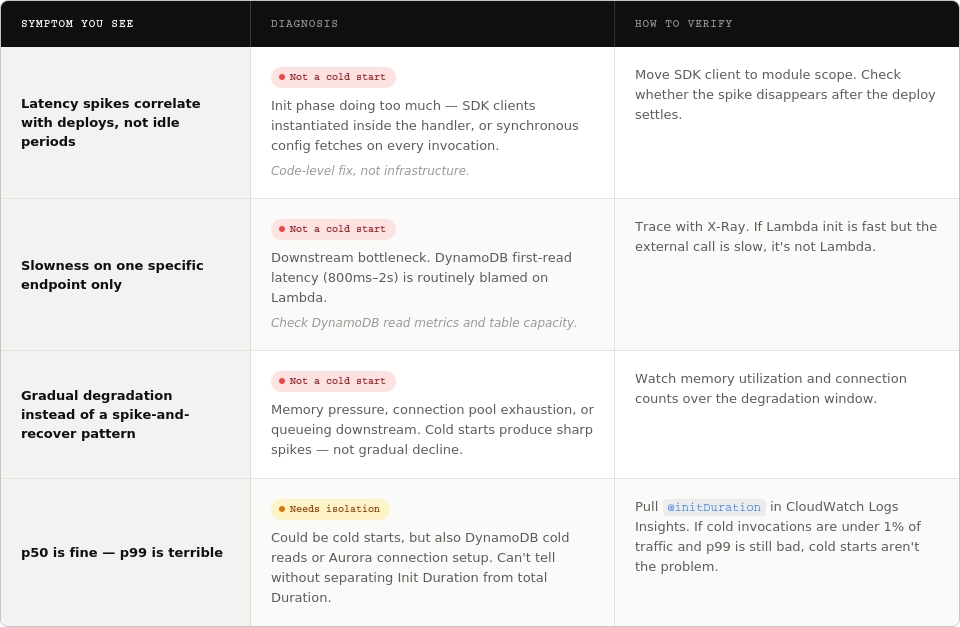

Before reaching for any cold-start fix, note that several other symptoms are easy to mistake for a cold start. Here’s how to tell them apart:

You can verify your findings by pulling @initDuration in CloudWatch Logs Insights (present only on cold invocations) and comparing the cold invocation rate against your latency distribution. If cold invocations are under 1% of traffic and p99 is still bad, cold starts aren’t your problem.
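The same check can be sketched offline against exported REPORT lines. The snippet below is a minimal illustration: the helper names are hypothetical, the sample log lines are synthetic, and it approximates cold latency as `Duration` plus `Init Duration`, since REPORT lists the two separately.

```python
import math
import re

# "Duration:" also appears inside "Billed Duration:" and "Init Duration:",
# so the lookbehinds keep only the plain handler duration.
DURATION_RE = re.compile(r"(?<!Billed )(?<!Init )Duration: ([\d.]+) ms")
INIT_RE = re.compile(r"Init Duration: ([\d.]+) ms")


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(report_lines):
    """Cold start rate plus p99 latency with and without cold invocations."""
    all_ms, warm_ms, cold = [], [], 0
    for line in report_lines:
        duration = DURATION_RE.search(line)
        if duration is None:
            continue  # not a REPORT line
        latency = float(duration.group(1))
        init = INIT_RE.search(line)
        if init:
            cold += 1
            latency += float(init.group(1))
        else:
            warm_ms.append(latency)
        all_ms.append(latency)
    if not all_ms:
        raise ValueError("no REPORT lines found")
    return {
        "cold_rate": cold / len(all_ms),
        "p99_all_ms": percentile(all_ms, 99),
        "p99_warm_ms": percentile(warm_ms, 99) if warm_ms else None,
    }


# Synthetic sample: 99 slow warm invocations and one fast cold one.
warm = "REPORT RequestId: w\tDuration: 900.00 ms\tBilled Duration: 900 ms\tMemory Size: 512 MB\tMax Memory Used: 90 MB\t"
cold_line = "REPORT RequestId: c\tDuration: 100.00 ms\tBilled Duration: 500 ms\tMemory Size: 512 MB\tMax Memory Used: 90 MB\tInit Duration: 400.00 ms\t"
summary = summarize([warm] * 99 + [cold_line])
print(summary)
```

In this sample the p99 is just as bad when cold invocations are excluded, which points at the handler or a downstream dependency rather than INIT.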

CloudWatch gives you Duration, Invocations, and Errors out of the box. What it doesn’t give you by default is a clean view of cold start rate over time, or the correlation between invocation frequency and average latency.

A starting query in CloudWatch Logs Insights:

```
filter @type = "REPORT"
| stats count() as invocations,
    count(@initDuration) as coldStarts,
    avg(@duration) as avgDuration,
    avg(@initDuration) as avgInitDuration
  by bin(5m)
```

This gives you invocation and cold start counts (divide one by the other for the cold start rate) plus the average handler and INIT durations per 5-minute bucket. Graph it against invocation count and the pattern from the opening story emerges immediately.
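If you export the per-bucket results, a few lines of post-processing turn them into a cold start rate and a quick correlation check. This is a sketch that assumes the rows have already been converted to dictionaries with numeric values keyed by the query's aliases. The sample numbers are invented for illustration, and the function names are hypothetical.

```python
import math


def with_cold_start_rate(rows):
    """Add a coldStartRate field to each non-empty bucket."""
    return [
        {**row, "coldStartRate": row["coldStarts"] / row["invocations"]}
        for row in rows
        if row["invocations"] > 0
    ]


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Invented 5-minute buckets, shaped like the query output above.
rows = [
    {"invocations": 200, "coldStarts": 2, "avgDuration": 60.0},
    {"invocations": 20, "coldStarts": 5, "avgDuration": 240.0},
    {"invocations": 100, "coldStarts": 2, "avgDuration": 75.0},
    {"invocations": 5, "coldStarts": 4, "avgDuration": 700.0},
]
buckets = with_cold_start_rate(rows)
r = pearson(
    [b["invocations"] for b in buckets],
    [b["avgDuration"] for b in buckets],
)
print(f"traffic vs. average duration correlation: {r:.2f}")
```

A clearly negative coefficient matches the inverse pattern on the graph: busier buckets run faster on average.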

The deeper capability isn’t running one prebuilt query; it’s being able to take a vague hunch (“test feels slow, maybe cold starts?”) and get to a visual answer fast enough that exploration stays cheap. That’s what operational visibility actually buys you: faster answers to the questions that matter.

Once you’ve confirmed cold starts are the dominant cause, the fixes are split by cost, complexity, and where the bottleneck lives in the INIT phase.

These solve the init-phase portion, which is usually the largest component you can actually influence.

Two features target the cold start directly. They solve different problems and are mutually exclusive on the same function.

You might think you’ve “optimized” cold starts, but how would you know whether it actually worked? Here’s what you can do:

If cold starts still dominate your latency budget after code changes, SnapStart, and provisioned concurrency, the honest answer may be that Lambda isn’t the right primitive for this workload. Alternatives:

This is a bigger conversation, but it’s worth having. The best fix to a cold start is sometimes not being on Lambda.

Cold starts aren’t a hard problem. Knowing whether they’re your problem is.

Most customers we work with have spent weeks on cold-start optimization when the actual bottleneck was two services downstream.

The faster you can get from question to visualization, the faster your team stops firefighting and starts understanding.